How the end of Moore's Law will usher in a new era in computing

In 1965 Gordon Moore, who would go on to co-found Intel, predicted that the number of components that could fit on a microchip would double every year for the next decade.

Moore revised his prediction in 1975 to a doubling of components every two years - a prophecy that remained true for another four decades.
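To see why the consequences compounded so dramatically, here is the arithmetic behind the two versions of the prediction as a minimal Python sketch. It assumes perfectly regular doubling, which real chips never tracked so neatly. The function name `moore_growth` is an illustrative choice, not an established formula.

```python
def moore_growth(years: float, doubling_period_years: float) -> float:
    """Growth factor after `years` if the component count doubles every `doubling_period_years`.

    Assumes idealised, perfectly regular doubling (an illustrative simplification).
    """
    return 2 ** (years / doubling_period_years)


# 1965 prediction: doubling every year for a decade
first_decade = moore_growth(10, 1)
# 1975 revision: doubling every two years, which held for roughly four decades
next_four_decades = moore_growth(40, 2)

print(f"1965-1975: x{first_decade:,.0f}")                               # x1,024
print(f"Four decades at two-year doubling: x{next_four_decades:,.0f}")  # x1,048,576
```

Ten annual doublings give roughly a thousand-fold increase; twenty two-year doublings over four decades give roughly a million-fold one.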

The ramifications of what is now known as ‘Moore’s Law’ for the world of technology and, by extension, for society itself have proven immeasurable.

The regular doubling of transistors - the semiconductor devices that switch electronic signals and power - meant that technology would become exponentially more powerful, smaller and cheaper.

But over the past few years, Moore’s Law has begun to reach its natural end as we squeeze every nanometer of advancement out of silicon chips.

Transistors are now getting too small to manufacture efficiently. Intel itself pushed back its 10-nanometer chip (a nanometer is a millionth of a millimeter) from 2016 to earlier this year as it struggled to keep the chips functioning, while its 7nm successor is on track for 2021.

By the mid-2020s, it is believed, the law will have plateaued completely as production costs rise and transistors reach their physical limits. It is predicted that the machines needed to produce such bewilderingly tiny components will cost $10bn.

“Below 20nm transistors cease to get more efficient or more cost-effective as you continue to shrink them,” says Stephen Furber, Professor of Computer Engineering at the University of Manchester. “Sure, they get smaller, and you can fit more on a chip, but the other historic benefits of shrinkage no longer apply.”

With Moore’s Law effectively becoming economically unsound, the technology industry will need to become more creative, without an established blueprint to follow. As a result, a new era in computing could emerge.

“This may not be noticeable for some time because hardware design has not been efficient because Moore’s law has given manufacturers a free ride,” says Noel Sharkey, Professor of AI at Sheffield University.

“The task now will be to take hardware up to as close to 100pc efficiency over the coming decade and that will keep processing power moving along. After that, it is anyone’s guess.”

A computer brain

Some experts believe the next step is to use chips inspired by the human brain, building ‘artificial neural networks’ to accelerate artificial intelligence. Such systems can learn without being explicitly programmed for set tasks, using interconnected artificial neurons based on their biological equivalents, which fire signals to one another through connections similar to the synapses in the brain.
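The idea of a neuron that learns a task rather than being handed the rule can be illustrated in a few lines of Python. This is a minimal sketch of a classic single artificial neuron (a perceptron) running in ordinary software, not a description of how neuromorphic chips or commercial neural network processors work internally. The helper names `neuron` and `train` and the learning settings are hypothetical choices for illustration.

```python
def neuron(inputs, weights, bias):
    """Fire (return 1) if the weighted sum of the inputs plus a bias is above zero; otherwise return 0."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=1):
    """Perceptron learning rule: after each wrong answer, nudge the connection weights toward the target."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - neuron(inputs, weights, bias)  # -1, 0 or +1
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# The task (logical AND) is never programmed as a rule; it is learned from labelled examples.
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(and_examples)
print([neuron(inputs, weights, bias) for inputs, _ in and_examples])  # [0, 0, 0, 1]
```

The weights that reproduce AND emerge only from adjusting the connections after each mistake, which is the sense in which such a system "learns" rather than being programmed.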

“The explosive developments over the last 15 years in machine learning and AI have been paralleled by developments in more brain-inspired approaches; neuromorphics,” says Furber.

“Although developed primarily for brain science, there is growing interest in commercial applications of neuromorphics, though nothing compelling yet. If and when there is a breakthrough in our understanding of how the brain works, this should unleash another huge leap forward in machine learning and AI.”

With big tech companies like Google, Microsoft and Facebook increasingly using AI solutions and algorithms, neural network processors (NNPs) are a big new market for chip producers.

American company Nvidia, best known for its video game graphics cards, announced its Tesla P100 chip in 2016, a reported $2bn development effort designed to put more power into deep learning.

And Intel demonstrated its own NNP last month, with Naveen Rao, corporate vice president and general manager of the Artificial Intelligence Products Group at Intel, saying that he expects the company’s AI solutions to have generated $3.5bn in revenue this year.

Perhaps more significantly, Rao also claimed that neural network models are becoming ten times more complex each year, an exponential rate of growth greater than that of any technology transition he is aware of.

Quantum supremacy?

Further away, but potentially more broadly disruptive for the computing industry, is the advent of quantum computing. Whereas traditional computers operate in binary, storing data as electrical signals in one of two states (1s and 0s), quantum computers use quantum bits, or qubits, which can exist in a combination of both states at once, known as a superposition. Companies around the world, including in the UK, are working feverishly to build this new frontier of tech.
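The difference between a bit and a qubit can be sketched numerically. The Python below is a simplified illustration that assumes a single idealised, noise-free qubit; the function names are hypothetical. A qubit's state is written as two amplitudes whose squared magnitudes give the odds of reading out 0 or 1, and a standard operation called the Hadamard gate turns a definite 0 into an even superposition of both.

```python
import math

# A qubit state as a pair of complex amplitudes (alpha, beta) for the outcomes 0 and 1,
# normalised so that |alpha|^2 + |beta|^2 = 1. Idealised: no noise or decoherence.
ZERO = (1 + 0j, 0 + 0j)


def hadamard(state):
    """Apply the Hadamard gate: a definite 0 becomes an equal superposition of 0 and 1."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))


def measurement_probabilities(state):
    """Probabilities of reading out 0 or 1 when the qubit is measured."""
    alpha, beta = state
    return abs(alpha) ** 2, abs(beta) ** 2


superposed = hadamard(ZERO)
p0, p1 = measurement_probabilities(superposed)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")  # P(0) = 0.50, P(1) = 0.50

# Describing n qubits fully on a conventional computer takes 2**n amplitudes.
for n in (1, 10, 54):
    print(f"{n:>2} qubit(s): {2 ** n:,} amplitudes")
```

The last loop hints at why quantum machines are hard to mimic classically: a complete description of 54 qubits already needs about 18 quadrillion amplitudes.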

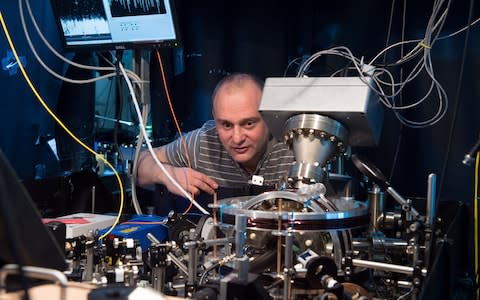

“There are numerous ways to build quantum computers,” says Winfried Hensinger, Professor of Quantum Technologies at the University of Sussex, who is working on a quantum computer with the company Universal Quantum.

“We are using silicon microchips that host arrays of electrodes. These electrodes emit electric fields that can trap individual charged atoms just above the surface of the microchip. Each atom forms a quantum bit.”

Currently, quantum computing is in its infancy, and no one has yet built a quantum computer that indisputably outperforms a traditional supercomputer. But achieving so-called ‘quantum supremacy’ is considered one of the holy grails of science and could lead to previously unimaginable computing speeds. The director of Google’s Quantum AI Labs, Hartmut Neven, has even proposed a new rule to supersede Moore’s Law.

Neven claims that quantum computing power is growing at a ‘doubly exponential’ rate relative to traditional computing. If conventional computing had advanced at that pace, so the theory goes, we would all have had smartphones in our pockets by the 1980s.
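The gap between exponential and ‘doubly exponential’ growth is easiest to see side by side. In this illustrative Python sketch a ‘step’ is an abstract unit of progress (a hypothetical assumption, not a calendar period), and the figures show only the shape of the two curves, not a forecast for any real machine.

```python
def exponential(step: int) -> int:
    """Moore's Law-style growth: the value doubles with every step."""
    return 2 ** step


def doubly_exponential(step: int) -> int:
    """'Doubly exponential' growth: the exponent itself doubles with every step."""
    return 2 ** (2 ** step)


for step in range(1, 6):
    print(step, exponential(step), doubly_exponential(step))
# 1 2 4
# 2 4 16
# 3 8 256
# 4 16 65536
# 5 32 4294967296
```

After five steps the exponential curve has reached 32, while the doubly exponential one has passed four billion.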

Hensinger says that quantum computers could be used to tackle some of the most interesting problems: “creating new pharmaceuticals, understanding protein folding in order to find a cure for dementia or ground-breaking applications for the financial sector”.

Earlier this year, Google claimed that it had achieved quantum supremacy when its 54-qubit ‘Sycamore’ computer performed a calculation in 200 seconds that, it said, would have taken the world’s most powerful supercomputer 10,000 years. Quantum competitor IBM disputed the claim, however, arguing that a classical system could produce the result ‘with higher fidelity’ in just 2.5 days.
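The size of the disagreement between Google and IBM is clearer with the numbers worked through. This Python sketch uses only the figures quoted above, plus one assumption for rough arithmetic: a year of 365.25 days.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # assumption: average year length, for rough arithmetic

sycamore_seconds = 200
google_classical_estimate = 10_000 * SECONDS_PER_YEAR  # Google's estimate for a classical supercomputer
ibm_classical_estimate = 2.5 * SECONDS_PER_DAY          # IBM's counter-estimate

print(f"Speed-up implied by Google's estimate: ~{google_classical_estimate / sycamore_seconds:.1e}x")  # ~1.6e+09x
print(f"Speed-up implied by IBM's estimate: ~{ibm_classical_estimate / sycamore_seconds:,.0f}x")      # ~1,080x
```

The two estimates imply speed-ups that differ by a factor of roughly 1.5 million, which is why the choice of classical benchmark weighs so heavily on any supremacy claim.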

Head in the clouds, but what does it mean for us?

What does the end of Moore’s Law mean for the average consumer? In the short term, probably not a lot. Most consumer devices use chips that are a few generations behind the cutting edge. And with Moore’s Law set to apply for another five years, the supercomputer in your pocket is likely to keep improving for a while yet.

“The end-user will continue to see progress for some time,” says Furber. “Hardware stagnation creates an opportunity for the software to catch up, and there is at least another decade of progress available from improvements in software along with AI accelerators.”

Cloud computing offers another way for processing power to keep advancing even as Moore’s Law comes to an end. With swathes of tasks performed by data centers packed with computing power, the microchips in your local hardware will matter less to a device’s capability.

But even those sprawling data centers will have finite room for improvement once Moore’s Law comes to an end. The big tech companies that provide much of the world’s cloud infrastructure - Amazon, Microsoft, Google - are likely to be the first customers to buy into a new generation of microprocessors.

Some even believe that Moore’s Law has held back computing development in recent years and that its accepted end will be a new dawn for innovation in technology.

“Moore’s ‘law’ came to an end over 20 years ago,” says David May, Professor of Computer Science at Bristol University and lead architect of the influential ‘transputer’ chip. “Only the massive growth of the PC market then the smartphone market made it possible to invest enough to sustain it.

“There’s now an opportunity for new approaches both to software and to processor architecture. And there are plenty of ideas - some of them have been waiting for 25 years. This presents a great opportunity for innovators - and for investors.”

Yahoo Finance