Google launches Bard chatbot amid ‘misleading or false information’ fears

Google has admitted its Bard chatbot can still give “misleading or false information” as it launched the artificial intelligence (AI) engine to the public.

The US technology giant said on Tuesday it would start letting people in the US and UK use Bard as “an early experiment” with the technology.

Bard is an attempt to compete with ChatGPT, the wildly popular Microsoft-backed chatbot launched in November.

People can ask both ChatGPT and Bard complex questions and they will answer in a conversational style, drawing from online information.

However, the software cannot tell whether information is accurate and the technology has been found to regurgitate wrong information.

The company wrote in a blog post: “While LLMs [large language models, the technical name for the AI] are an exciting technology, they’re not without their faults… they can provide inaccurate, misleading or false information while presenting it confidently.

“For example, when asked to share a couple suggestions for easy indoor plants, Bard convincingly presented ideas…but it got some things wrong, like the scientific name for the ZZ plant.”

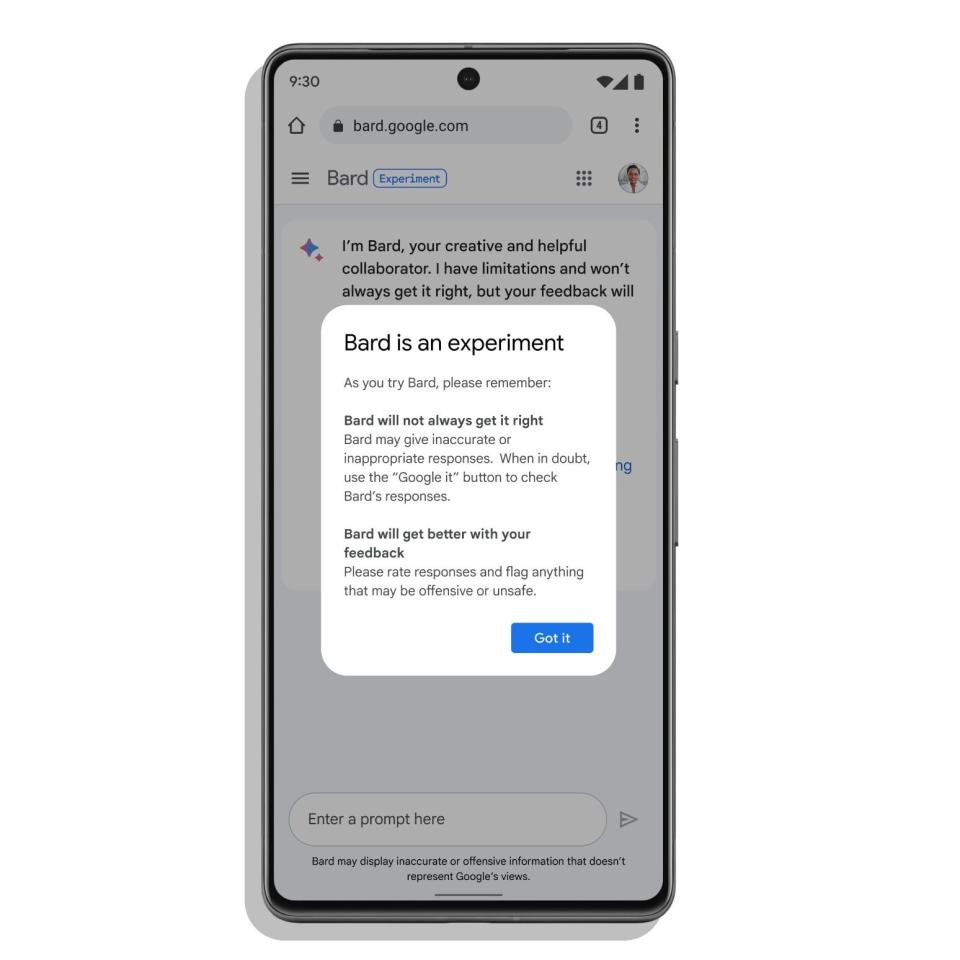

The relaunched AI chatbot will carry a health warning with every answer, informing users that Bard may “display inaccurate or offensive information”.

The release of Bard comes after the tech giant’s botched launch last month, in which the tool displayed an inaccurate answer about the James Webb Space Telescope.

Bard falsely claimed the telescope had taken the first picture of a planet outside of the solar system.

Zoubin Ghahramani, a Cambridge professor and vice president of research at Google, said: “This is an experiment and there are limitations.”

Responses from Bard will be called “drafts” and the chatbot will provide three potential answers to each question.

Jack Krawczyk, a senior director at Google, said: “We are very deliberate in using the word draft, because it implies it is incomplete.”

ChatGPT has forced rivals such as Google to jump-start their own AI programs. Google labelled ChatGPT’s launch a “code red” threat to its search engine dominance.

OpenAI, the Silicon Valley start-up behind ChatGPT, has already signed a multibillion-dollar deal with Microsoft to build its technology into the Bing search engine.

Despite its viral success, ChatGPT has also been found to display misinformation and nonsense. With a little prompting, such bots can be tricked into apparently breaking their built-in rules, for instance by declaring love or feelings for users.

Google’s Bard chatbot will be opened up to users in the UK and the US starting today, but it will be subject to a set of strict guardrails as Google attempts to stop its technology delivering awkward or offensive answers.

The AI will try to avoid giving views on controversial topics.

Bard has been trained on millions of lines of text, including online books, articles and encyclopaedias. Its software then draws on this material to provide human-like answers to questions and prompts.

Google’s AI leaders stressed Bard was designed to be used to provide “creative” prompts, with human users making use of the tool to “collaborate with generative AI”.

Yahoo Finance
